In Swift, both Float and Double represent floating-point numbers, that is, numbers with a fractional part.

The difference between a Float and a Double is in the precision:

- A Double (64 bits) is precise to at least 15 significant decimal digits.

- A Float (32 bits) is precise to as few as 6 significant decimal digits.
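The 6 and 15 digit figures follow from how many bits each type stores for the significand. The snippet below is a minimal sketch of that calculation; the helper name `guaranteedDecimalDigits` is made up for this illustration, and it assumes the usual rule of thumb that the guaranteed digit count is the stored significand bits times log10(2), rounded down.

```swift
import Foundation

// Hypothetical helper (not part of the standard library):
// floor(significandBitCount * log10(2)) gives the number of decimal
// digits that are guaranteed to survive in the given type.
func guaranteedDecimalDigits<T: BinaryFloatingPoint>(_ type: T.Type) -> Int {
    let bits = Double(T.significandBitCount)   // 23 for Float, 52 for Double
    return Int((bits * log10(2.0)).rounded(.down))
}

let floatDigits = guaranteedDecimalDigits(Float.self)
let doubleDigits = guaranteedDecimalDigits(Double.self)

print(floatDigits)    // 6
print(doubleDigits)   // 15
```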

As a rule of thumb, you should use a Double in Swift.

Swift Defaults to Doubles

By default, Swift infers the type Double when it encounters a floating-point literal in the code.

You can see this by creating a variable that represents a decimal number without specifying the data type.

For example:

var decimal = 5.2319
print(type(of: decimal))

Output:

Double

As you can see, the type of the variable is Double automatically.

To make this number a Float, you have to state the type explicitly, for example with a type annotation.

For instance:

var decimal: Float = 5.2319
print(type(of: decimal))

Output:

Float
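As a short sketch of the different spellings, the snippet below creates a Float with a type annotation, with a converting initializer, and with the `as` operator. It also shows that Swift does not mix Float and Double implicitly: one side has to be converted before the two can be added. The constant names are chosen only for this illustration.

```swift
let inferred = 5.2319              // no annotation: inferred as Double
let annotated: Float = 5.2319      // type annotation
let converted = Float(inferred)    // converting an existing Double
let coerced = 5.2319 as Float      // coercing the literal

print(type(of: inferred))          // Double
print(type(of: annotated))         // Float
print(type(of: converted))         // Float
print(type(of: coerced))           // Float

// Float and Double cannot be added directly; convert one side first.
let total = inferred + Double(annotated)
print(type(of: total))             // Double
```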

Swift defaults to using Double because it is the more accurate data type in comparison to Float.

To understand the difference in accuracy, let’s see the values of Pi as a Double and as a Float.

print(Double.pi)
print(Float.pi)

Output:

3.141592653589793
3.1415925

As you can see, you get a more accurate value for Pi as a Double in comparison to Float.

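- The Double value of Pi is printed with 15 decimal places.

- The Float value of Pi is printed with only 7 decimal places, and the last one already differs from the true value (3.14159265...).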
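To put a number on that gap, one option is to widen Float.pi to a Double (which does not change its value) and subtract it from Double.pi. This is only a rough sketch; the constant names are made up for the example.

```swift
// Widening a Float to a Double is exact, so this keeps Float.pi's value.
let floatPiAsDouble = Double(Float.pi)

// How far the Float approximation is from the Double approximation.
let gap = Double.pi - floatPiAsDouble

print(gap)          // a small positive number on the order of 1e-7
print(gap > 0)      // true: Float.pi is slightly smaller than Double.pi
print(gap < 1e-6)   // true
```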

If you are dealing with matrices in your Swift code, you might want to keep the entries as accurate as possible.

This is because sometimes even a small deviation in the input can produce an entirely different output. (See the condition number.)
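A quick way to see limited precision silently changing a value is to add 1 to a number just beyond what a Float can count exactly. The sketch below uses 16,777,216 (2 to the power of 24) as that number; the variable names are illustrative only.

```swift
let floatBase: Float = 16_777_216     // 2^24
let doubleBase: Double = 16_777_216

// A Float cannot represent 16,777,217, so the addition rounds back
// to the starting value and the + 1 is lost.
print(floatBase + 1 == floatBase)     // true

// A Double has enough significand bits to keep the + 1.
print(doubleBase + 1 == doubleBase)   // false
```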

But if Float is the less accurate data type for representing decimal numbers, why would you ever use it?

When to Use Floats in Swift?

As a rule of thumb, you should use Doubles in Swift.

However, it is worth noting that a Double takes more memory than a Float: the higher precision comes from storing 64 bits instead of 32.

For instance, let’s compare the memory footprint of Double.pi and Float.pi using MemoryLayout:

print(MemoryLayout.size(ofValue: Double.pi))
print(MemoryLayout.size(ofValue: Float.pi))

Output:

8
4

As you can see, the Double representation of Pi takes twice as much memory as the Float representation.
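Four bytes per value rarely matters for a single number, but it adds up in large collections. As a rough sketch, assuming a hypothetical buffer of one million samples, the element storage can be estimated with MemoryLayout's stride:

```swift
// Hypothetical element count, chosen only for illustration.
let sampleCount = 1_000_000

// stride is the distance in bytes between consecutive elements in an array.
let floatBytes = sampleCount * MemoryLayout<Float>.stride
let doubleBytes = sampleCount * MemoryLayout<Double>.stride

print(floatBytes)    // 4000000
print(doubleBytes)   // 8000000
```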

So the only two reasons to use a Float in Swift are:

- You can live with the lower precision and want to reduce memory consumption.

- A type or API you work with requires a Float.

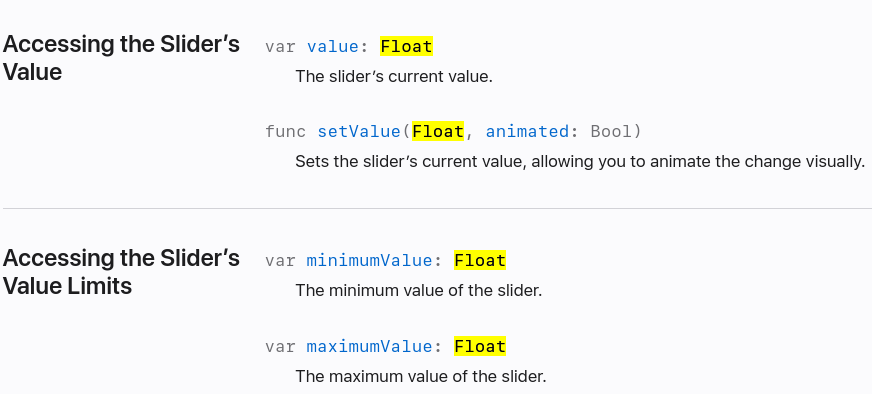

A great example of the latter point is when dealing with UISliders.

It does not make sense for a slider to be extremely accurate. Thus the UISlider type’s value is implemented as a Float instead of a Double.
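In practice this means converting at the boundary when the rest of your code uses Double. The sketch below does not import UIKit so that it can run anywhere; `SliderStandIn` is a hypothetical stand-in that only mimics the Float-typed `value` property of UISlider.

```swift
// Hypothetical stand-in for UISlider, for illustration only.
// Like UISlider, it exposes its value as a Float.
struct SliderStandIn {
    var value: Float = 0
}

var slider = SliderStandIn()

// The rest of the app keeps its numbers as Double.
let storedVolume: Double = 0.75

// Convert explicitly when handing the value to the Float-based API...
slider.value = Float(storedVolume)

// ...and convert back when reading it.
let readBack = Double(slider.value)

print(readBack == storedVolume)   // true here, because 0.75 is exactly representable in both types
```

For values that a Float cannot represent exactly, the round trip can lose digits, which is another reason to keep Double as the type everywhere except at the API boundary.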

Conclusion

Today you learned what the difference is between a Double and a Float in Swift.

To recap, Float is less accurate than Double.

- Float precision can be as low as 6 significant decimal digits.

- Double precision is at least 15 significant decimal digits.

Swift defaults to Double instead of Float whenever it infers the type of a floating-point literal.

You should use Float only if you want to sacrifice precision to save memory. Also, if you are working with types that require Float, then you have to use a Float. For example, UISlider values are Floats.