Artificial Intelligence, or AI, is the simulation of human intelligence processes by computers.

AI has become one of the 21st-century buzzwords. Even though the term Artificial Intelligence sounds cool, it’s nothing but maths and probabilities behind the scenes.

Conceptually, artificial intelligence is nothing new. The first neural network models were proposed back in the 1940s.

But thanks to the latest advancements in computational power, the theory has become a reality.

But why does AI have so much hype? Will AI take over? How does AI work? What types of AI are there?

This is a comprehensive guide to Artificial Intelligence. After reading this guide, you will have a better understanding of why AI is significant for humans. More importantly, you will understand what AI means and how it works. You will also learn what types of AI there are and what the most popular applications of AI are.

What Is Artificial Intelligence (AI)?

Artificial Intelligence, or AI, is the simulation of the human thinking process by a computer or other machine. The goal of AI is to build computer programs that are capable of replicating the problem-solving and decision-making skills we humans possess.

Under the hood, AI is a fancy word for using linear algebra to analyze data and make predictions.

The main characteristic of an AI program is that it has the ability to learn from data. When the AI receives an input, it makes a prediction based on the data it has analyzed in the past.

During the past couple of years, the use of AI has increased rapidly. This has led to impressive applications, such as self-driving cars, facial recognition systems, recommendation engines, and much more.

Contrary to what you might think, artificial intelligence is not as mythical and magical as it sounds. Before digging deeper into how AI works, let’s take a look at an example of an AI program.

Example Artificial Intelligence Program

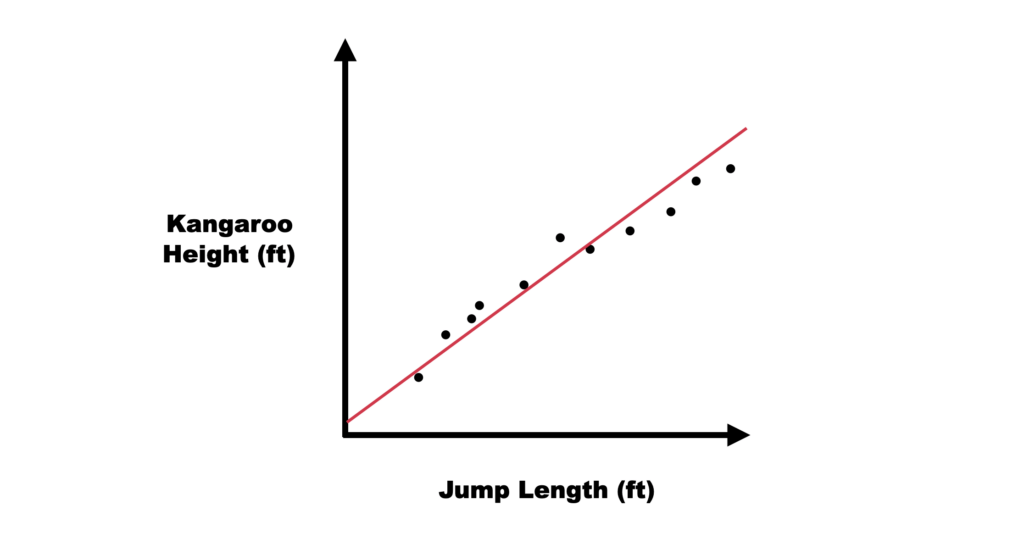

A simple example of an AI program is a model that predicts how far a kangaroo can jump given its height. To make this program work, you have to:

- Measure the heights of a bunch of kangaroos.

- Measure the jump lengths of the kangaroos.

- Use basic linear algebra to find the correlation between the kangaroo height and the length of the jump.

Mathematically speaking, the last step means finding the slope of the line that best fits the data points in an x-y plane. This is the learning phase of the program.

Once the program has analyzed the data, it can take the height of a kangaroo as an input and calculate the jump length as an output.

The above program is an example of machine learning, which is a subfield of AI. To build this kind of program, you need basic programming skills and some high school maths.

Of course, based on this description, you cannot just go and implement such a program. But I wanted to show you this to demonstrate that AI is truly nothing but maths behind the scenes.
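To make the idea a bit more concrete, here is a minimal sketch of the kangaroo program. It assumes a straight-line relationship and uses ordinary least squares to find the slope. The measurements are made up for illustration, and the names `fit_line` and `predict_jump` are hypothetical helpers, not part of any library.

```python
# Hypothetical measurements, made up for illustration only.
heights_m = [1.0, 1.2, 1.4, 1.6, 1.8]  # kangaroo heights in meters
jumps_m = [5.0, 6.0, 7.0, 8.0, 9.0]    # jump lengths in meters


def fit_line(xs, ys):
    """Return (slope, intercept) of the least-squares line through the points."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept


def predict_jump(height, slope, intercept):
    """Predict the jump length for a kangaroo of the given height."""
    return slope * height + intercept


# Learning phase: find the line that best fits the data.
slope, intercept = fit_line(heights_m, jumps_m)

# Prediction phase: estimate the jump of a kangaroo the program has not seen.
print(predict_jump(1.5, slope, intercept))
```

With real measurements the points would not sit perfectly on a line, but the same two phases (fit, then predict) would still apply.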

Understanding Artificial Intelligence

Most people think about robots when they hear the term “Artificial Intelligence”. This is because movies and novels are full of stories where AI robots have taken over the world.

This is far from the truth.

AI is nothing but a mathematical computer program that handles and analyzes data.

The concept of AI is based on the idea that a computer can easily mimic human intelligence processes.

This varies from simple tasks to more complex ones.

Earlier on, you saw an example of predicting the jump of a kangaroo based on its height. This is a simple AI program that is relatively easy to create.

But there are much more complex tasks that AI might be capable of performing.

Recently, AI developers and researchers have been able to mimic human brain activities, such as:

- Learning

- Reasoning

- Perception

As a matter of fact, it is already possible to define these activities concretely.

Due to the rapid developments in AI, many experts believe that in the near future, humans will be able to develop an AI that exceeds the human brain’s capacity.

Others believe AI will never surpass humans. They argue that all cognitive activity is based on value judgments and is highly subjective.

Only time will tell whether this is true or not.

Because AI is a broad concept, it has been split into a number of subfields.

Next, let’s take a look at the most notable subfields of AI, some of which you may have heard of.

The Subfields of AI

Artificial Intelligence is a broad concept. Similar to how the human brain has multiple functions, AI has a lot of subfields and studies.

The main subfields of AI are:

- Machine Learning

- Deep Learning

- Neural Networks

- Cognitive Computing

- Natural Language Processing

- Computer Vision

I’m sure you’ve heard at least some of these terms before. Similar to AI, the names of the subfields of AI are tossed around quite frequently.

Notice that the boundaries between these subfields are not clear.

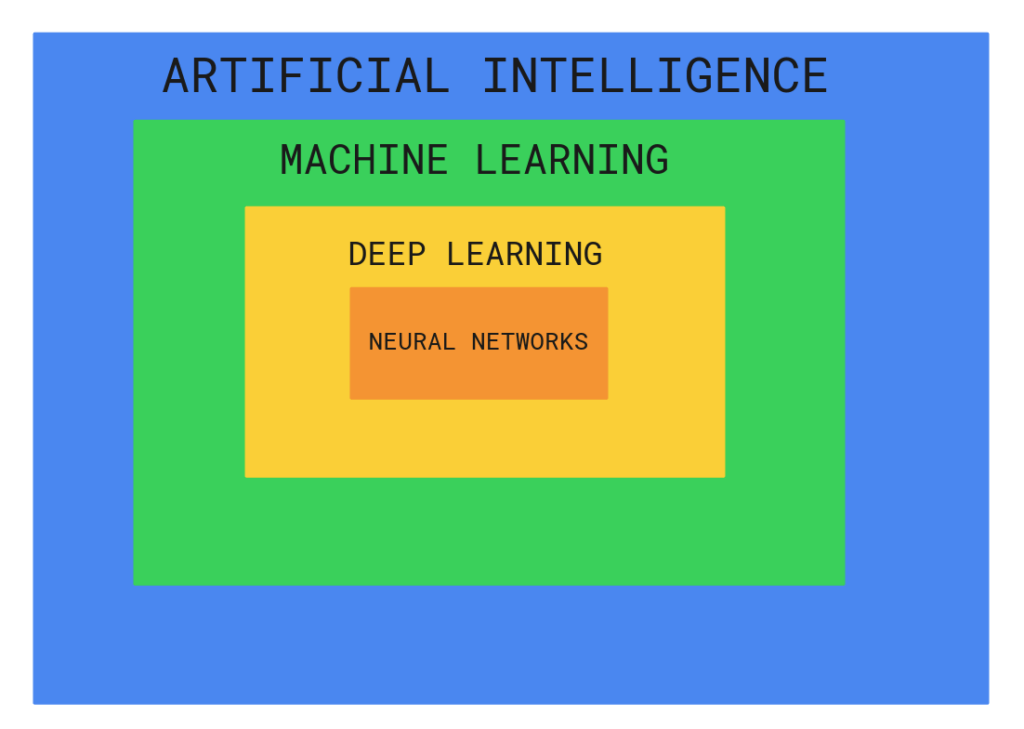

Before digging deeper into the details of these branches of AI, take a look at the image below. This image illustrates the relationship between AI, Machine Learning, Neural Networks, and Deep Learning:

As you can tell, AI is the umbrella term that covers machine learning. Machine learning in turn covers deep learning, which is built on neural networks.

Next, let’s take a closer look at these subfields of AI.

1. Machine Learning

Machine Learning (ML) is a popular branch of artificial intelligence. This field of study focuses on using data and algorithms to mimic the human learning process.

Machine learning programs learn from data and the accuracy of the programs improves gradually.

The kangaroo jump length example is a perfect demonstration of machine learning. The computer program is able to figure out how the kangaroo’s height affects its jump length.

How Does Machine Learning Work?

Generally speaking, the machine learning process starts with data observations. The observations can be anything, such as:

- Examples

- Direct experience

- Instructions

The machine learning algorithms start looking for patterns in this data. Later on, the machine learning program can use the data and patterns to make inferences.

The key function of any machine learning program is to automate the computer learning process. A successful machine learning program can learn from data without human intervention or assistance. Furthermore, it can perform actions based on what it has learned.

The concept of machine learning has been around for a while. The term was coined in 1959 by Arthur Samuel, who was an IBM computer scientist. Samuel created a program that could play checkers.

Samuel designed the program to learn from the previous games. This way, the more the program played, the more experience it gained, and the better it became.

This was one of the first applications of machine learning as we know it.

Machine learning is an important concept and subfield of AI. A machine learning program can solve problems much faster than any human could. Thanks to the computational power of modern-day computers, machines can easily identify patterns in the input data to automate manual processes.

Even though machine learning is a subfield of AI, it’s still a broad concept. To make a computer program learn, there are many strategies to choose from. The most popular machine learning methods include:

- Supervised learning

- Unsupervised learning

- Semi-supervised learning

To keep it in scope, we are not going to take a deeper look at these.

Even though machine learning might sound like fiction, it’s not. Machine learning is used in businesses all around the world across different industries. Some of the notable technologies and industries that utilize machine learning to improve their products include:

- Data security

- Finance

- Health care

- Fraud detection

- Retail

Next, let’s take a look at a popular subfield of machine learning called deep learning.

2. Deep Learning

Learning by example comes naturally to us humans. But a computer doesn’t understand what learning is.

Deep learning is a machine learning subfield. Deep learning algorithms teach computers how to learn by example.

One of the most popular applications of deep learning is self-driving vehicles. The deep learning algorithms that run behind the scenes can recognize objects on the road, such as:

- Road signs

- Pedestrians

- Traffic lights

- Traffic lanes

- Other vehicles

Also, modern-day voice control systems are powered by clever deep-learning algorithms. Deep learning has gained a lot of momentum in recent years. This is all thanks to the achievements that weren’t possible a short while ago.

How Does Deep Learning Work?

A typical deep-learning program has the ability to make classifications from images, sounds, or text. The deep learning algorithm analyzes a large collection of training data with a multi-layered neural network architecture.

The ideas behind deep learning date back to the 1980s, so it’s not a new concept. But thanks to the latest advancements in computing power, the theory has become a reality.

Deep learning is computationally heavy. Deep learning algorithms need lots of labeled data to work with. For instance, to train a deep learning algorithm to drive a car, it has to analyze millions of images from the road.

These days, computing hardware such as GPUs is far more powerful than the hardware of the 1980s. Thanks to this, developers can write efficient deep-learning algorithms whose training time is hours instead of weeks or months.

Commonly, deep learning algorithms use neural networks (or deep neural networks). Let’s inspect how neural networks work.

3. Neural Networks

A neural network mimics the behavior of the human brain in pattern recognition. The neural network simulates the way biological neurons communicate with one another in the brain.

As you learned earlier, neural networks and deep learning go hand in hand. A neural network with several hidden layers forms the basis for a deep learning algorithm.

How Does a Neural Network Work?

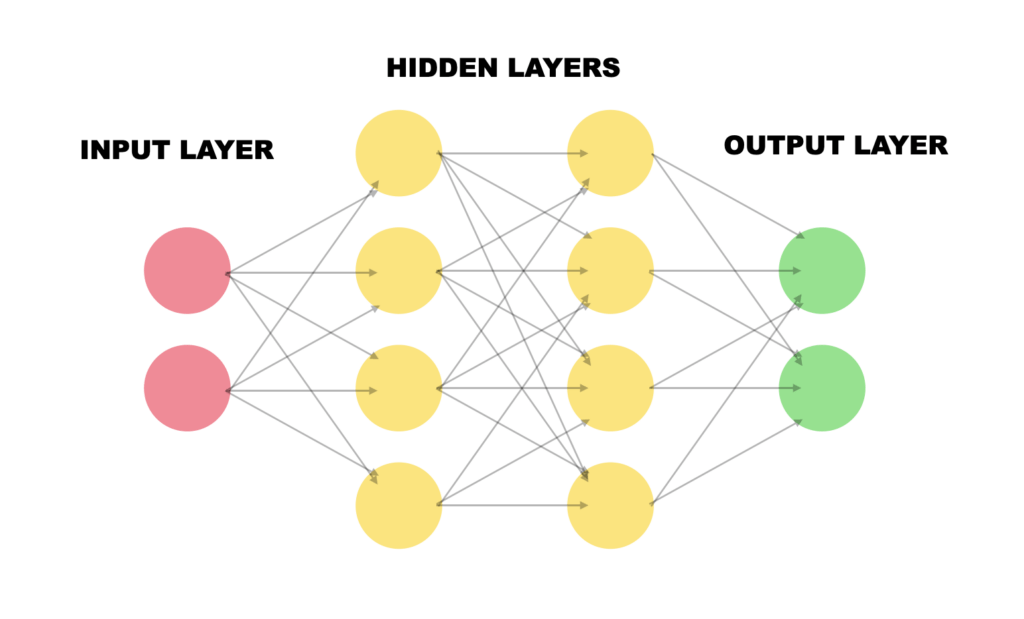

A neural network is formed by:

- An input layer

- One or more hidden layers

- An output layer

The layers consist of nodes that can be treated as artificial neurons. In a fully connected network, every node is connected to all the nodes in the next layer. Each connection has a weight, and each node has a threshold.

If the output of a node exceeds the threshold, it gets activated and sends data to the next layer.
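The activation idea can be sketched in a few lines of code. This is a simplified illustration with a hard on/off threshold and hand-picked weights, not how production networks are built. The helper names `step_neuron` and `forward` are hypothetical.

```python
def step_neuron(inputs, weights, threshold):
    """Fire (return 1) if the weighted sum of the inputs exceeds the threshold."""
    weighted_sum = sum(i * w for i, w in zip(inputs, weights))
    return 1 if weighted_sum > threshold else 0


def forward(inputs, layers):
    """Pass the inputs through each layer; a layer is a list of (weights, threshold) nodes."""
    activations = inputs
    for layer in layers:
        activations = [step_neuron(activations, w, t) for w, t in layer]
    return activations


# Hypothetical hand-picked weights: two hidden nodes and one output node
# that together compute XOR of two binary inputs.
hidden_layer = [
    ([1, 1], 0.5),   # fires if at least one input is 1 (OR)
    ([1, 1], 1.5),   # fires only if both inputs are 1 (AND)
]
output_layer = [
    ([1, -1], 0.5),  # fires if OR is on and AND is off
]
network = [hidden_layer, output_layer]

for a in (0, 1):
    for b in (0, 1):
        print(a, b, forward([a, b], network))
```

In a real network, the weights and thresholds are not picked by hand. They are adjusted automatically during training.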

This process is reminiscent of the learning activity that takes place in our brains all the time.

When training a deep learning algorithm, the developer runs the training data through a neural network. The algorithm learns to classify the training data with neural network activations. The model’s accuracy improves gradually.
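To give a feel for what training means, here is a minimal sketch that trains a single artificial neuron with the classic perceptron learning rule. This is a deliberately simpler stand-in for the training methods used in deep learning. The task (learning the logical AND of two inputs), the learning rate, and the function names are assumptions chosen for illustration.

```python
def predict(inputs, weights, bias):
    """Return 1 if the weighted sum plus bias is positive, else 0."""
    total = sum(i * w for i, w in zip(inputs, weights)) + bias
    return 1 if total > 0 else 0


def train(samples, epochs=10, learning_rate=1.0):
    """Run the perceptron learning rule and record the accuracy after each epoch."""
    weights = [0.0, 0.0]
    bias = 0.0
    history = []
    for _ in range(epochs):
        for inputs, target in samples:
            # Nudge the weights toward the target whenever the prediction is wrong.
            error = target - predict(inputs, weights, bias)
            weights = [w + learning_rate * error * i for w, i in zip(weights, inputs)]
            bias += learning_rate * error
        correct = sum(predict(i, weights, bias) == t for i, t in samples)
        history.append(correct / len(samples))
    return weights, bias, history


# Training data for the logical AND of two binary inputs.
and_samples = [([0, 0], 0), ([0, 1], 0), ([1, 0], 0), ([1, 1], 1)]

weights, bias, history = train(and_samples)
print(history)  # accuracy after each pass over the training data
```

The accuracy can dip along the way, but on this small task it ends up at 100% once the neuron has seen the data enough times.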

To keep it in scope, we are not going to dig deeper into the maths of neural networks. Now you should have a basic level of understanding of how machine learning, deep learning, and neural networks work.

The following sections teach you about other relevant subfields of AI, starting with Cognitive Computing.

4. Cognitive Computing

Cognitive computing means using computer models to mimic the human thought process in uncertain situations. This subfield of AI aims to make computers understand natural language and make object recognition possible. The programs mimic human brain behavior.

Cognitive computing is a mix of other subfields of AI. To achieve the cognitive computing goals, the following technologies and subfields of AI are used:

- Neural Networks

- Machine Learning

- Deep Learning

- Natural Language Processing

- Speech Recognition

- Object Recognition

- Robotics

And more.

When these are combined with clever self-learning algorithms, pattern recognition, and proper data analysis, you have the foundation of cognitive computing!

Cognitive computing is useful in a broad range of applications, including risk assessment, speech recognition, face detection, and many more.

How Does Cognitive Computing Work?

Similar to the other subfields in AI, cognitive computing uses self-learning algorithms that require a ton of structured or unstructured data. The data is fed to a machine learning algorithm that can learn patterns in the data. These cognitive systems have the ability to refine the data processing and pattern identification process.

As an example, you can teach an AI to recognize pictures of dogs. To make this type of cognitive system work, you must have tons of pictures of dogs. The more dog images the system sees, the better it becomes at recognizing them.

For a cognitive system to achieve the previously described capabilities, it must be:

- Adaptive and flexible to be able to learn even when the information changes.

- Interactive so that the users can interact with the cognitive systems to define their needs when they change. Besides, the systems must interact with other systems, devices, and platforms.

- Iterative to ask questions and take in additional data to make sense of a new problem.

- Stateful to retain information about similar situations in the past.

- Contextual to understand the context of the problem. A successful cognitive system understands, identifies, and mines contextual data. This could mean tasks, goals, location, domain, syntax, or something similar. The data can be drawn from multiple sources and it can be structured or unstructured.

5. Natural Language Processing

Natural Language Processing or NLP is a popular AI subfield. NLP makes it possible for a computer to process text and spoken language the way humans do. This allows for natural language interaction between a computer and a human.

NLP is a combination of:

- Computational linguistics

- Statistical models

- Machine learning models

- Deep learning models

When combined, these technologies help computers understand the meaning of text or spoken language. An NLP system can often sense the speaker’s intention and sentiment too!

NLP is particularly useful and commonly used in:

- Translations

- Responding to spoken commands

- Summarizing huge amounts of text quickly

Chances are you have interacted with an NLP system in the past. For example, voice assistants and text-to-speech programs rely on NLP behind the scenes. Also, many websites have automated chatbots. These are powered by NLP algorithms that aim to understand the client’s intent.

How Does Natural Language Processing Work?

Human language is ambiguous in a lot of ways. To accurately predict the intent of text or spoken language, one must understand:

- Sarcasm

- Idioms

- Metaphors

- Grammar exceptions

- Usage exceptions

- Sentence structure variations

- Homonyms

- Homophones

And more.

To be successful, natural-language-driven software must be capable of handling each of these irregularities. Here are popular NLP techniques that break the text or speech down into logical parts to help the system understand it better.

- Speech recognition. This strategy converts voice into text. Any piece of software that answers spoken questions or follows spoken commands must employ this strategy. This is a big challenge because humans talk in irregular ways. Some speak quickly, some slur their words, and some emphasize words differently. Also, people have different accents and use slang terms and otherwise incorrect grammar.

- Grammatical tagging. This strategy determines the type of a word based on context. For example, the word “make” is a verb in the context of “I can make a sandwich”. On the other hand, the word “make” is a noun in the context of “What make of car is that?”.

- Word sense disambiguation. This is a semantic analysis approach that helps with words that have multiple meanings. It determines the meaning of a word that makes the most sense given the context. For example, “make” means “achieve” in “make the grade”, but it means “place” in “make a bet”.

- Named entity recognition. This technique picks up useful words and phrases from the text. For example, it can determine that “Helsinki” is a location or that “Nick” is a man’s name.

- Co-reference recognition. Co-reference recognition is used to understand when two words refer to the same entity. The most popular example is determining which person a pronoun refers to. For example, “Mary is my wife. She made me breakfast”. Here, co-reference recognition is used to determine that the word “She” refers to “Mary”.

- Sentiment analysis. This is an approach to understanding sarcasm, emotions, suspicion, confusion, and attitudes from the text.

- Natural language generation. This is sometimes described as the reverse of speech recognition. Natural language generation takes structured information and converts it to human language.
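To illustrate one of these techniques, here is a toy sketch of grammatical tagging for the word “make” from the example above. It guesses the word type from the word right before it. The cue word lists and the function name `tag_make` are made up for this illustration. Real taggers learn such patterns from large annotated text collections instead of hand-written rules.

```python
# Hypothetical, hand-written context cues for illustration only.
NOUN_CUES = {"what", "which", "the", "a", "this", "that"}
VERB_CUES = {"can", "to", "will", "i", "you", "we", "they"}


def tag_make(sentence):
    """Guess whether "make" is a verb or a noun from the word right before it."""
    words = sentence.lower().replace("?", "").replace(".", "").split()
    position = words.index("make")  # raises ValueError if "make" is missing
    previous = words[position - 1] if position > 0 else ""
    if previous in NOUN_CUES:
        return "noun"
    if previous in VERB_CUES:
        return "verb"
    return "unknown"


print(tag_make("I can make a sandwich"))      # verb
print(tag_make("What make of car is that?"))  # noun
```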

6. Computer Vision

Computer vision is a key subfield of AI that enables computers to extract information from images, videos, and other types of visual inputs. More importantly, computer vision systems can take action or offer recommendations based on the extracted information. For example, self-driving cars use computer vision to find their way.

To put it simply, AI makes computers think and computer vision makes them see and observe.

Similar to the other subfields of AI, computer vision mimics the human brain’s behavior of observing things.

To build a successful computer vision system, you need to feed the algorithm a lot of data. The algorithm then analyzes the data over and over until it starts to recognize objects in images.

As an example, to train a computer vision system to recognize traffic lights, the system needs thousands of images of traffic lights. Besides, it needs to learn to tell the difference between a traffic light and a light post, for example. Thus, the system also needs to ingest a lot of images of objects that resemble traffic lights.

How Does Computer Vision Work?

To build a successful computer vision program, there are two main technologies being used:

- Deep Learning

- Convolutional Neural Networks (CNN)

Deep learning algorithms allow the computer to teach itself about the context of an image or video. Once the system has digested enough data, the system can see the data and start distinguishing images from one another. The key is that once the system is up and running, there is no need for a human to program the rules for recognizing the images. Instead, it’s the machine learning system that teaches itself.

To learn from images, a convolutional neural network (CNN) is used.

The CNN helps the deep learning model “see” the images. It works by breaking an image down into pixels that are given labels. The algorithm then uses the labeled pixels to predict what it sees in the image.

The CNN works by applying a mathematical operation called convolution to the pixel data repeatedly. Once the convolution is applied, the program checks the accuracy of its predictions. When repeated enough, the predictions start to become accurate and the program can see objects the way we humans do.
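Here is a minimal sketch of the convolution operation itself, written in plain Python. The tiny image and the edge-detecting kernel are hand-picked assumptions for illustration. In a real CNN, the kernel values are learned during training.

```python
def convolve2d(image, kernel):
    """Slide the kernel over the image (no padding, stride 1) and return the feature map.

    Strictly speaking this is cross-correlation (the kernel is not flipped),
    which is what deep learning libraries usually mean by convolution.
    """
    k_rows, k_cols = len(kernel), len(kernel[0])
    out_rows = len(image) - k_rows + 1
    out_cols = len(image[0]) - k_cols + 1
    output = []
    for r in range(out_rows):
        row = []
        for c in range(out_cols):
            total = sum(
                image[r + i][c + j] * kernel[i][j]
                for i in range(k_rows)
                for j in range(k_cols)
            )
            row.append(total)
        output.append(row)
    return output


# Hypothetical 4x4 grayscale image: dark on the left (0), bright on the right (1).
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]

# A hand-picked kernel that responds to dark-to-bright vertical edges.
vertical_edge_kernel = [
    [-1, 1],
    [-1, 1],
]

feature_map = convolve2d(image, vertical_edge_kernel)
for row in feature_map:
    print(row)  # each row is [0, 2, 0]: the edge sits in the middle column
```

The large values in the feature map mark where the pattern the kernel looks for appears in the image.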

Types of AI

In the previous section, you learned the subfields in the study of AI.

Now it’s time to take a look at the types of AI based on capabilities and functionalities.

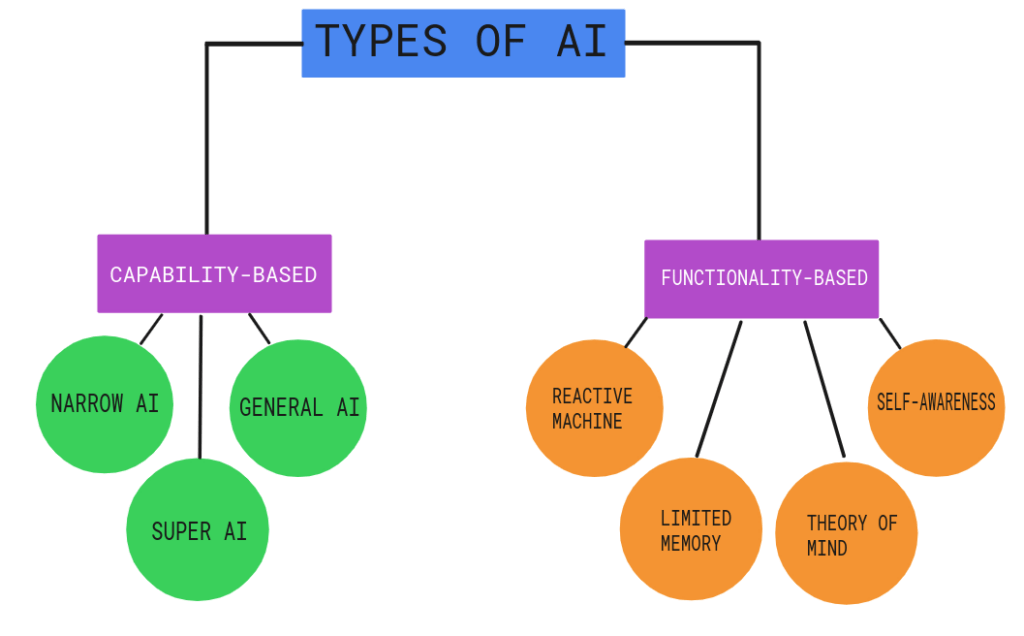

Based on capabilities, all the AI programs can be split into three categories:

- Narrow AI

- General AI

- Super AI

And when it comes to functionalities, AI can be split into the following four categories:

- Reactive Machines

- Limited Memory Machines

- Theory of Mind

- Self-Aware AI

Let’s take a closer look at these types of AIs starting from the capability-based AI types.

AI Types Based on Capabilities

1. Narrow AI

Narrow AI, or Artificial Narrow Intelligence (ANI), can only focus on a single task.

All the AI solutions today are examples of narrow AI. Google Translate, Apple Siri, and self-driving cars are great examples of narrow AI.

These systems can only perform one type of task. The narrow AI systems cannot do actions beyond their limitations.

Thanks to the latest advancements in the field of AI, narrow AI is becoming increasingly popular in our everyday lives. Notice that the word “narrow” is typically omitted. Instead, people just talk about AI when they actually refer to narrow AI.

2. General AI

General AI, or Artificial General Intelligence (AGI), is an AI that can learn any task that a human can. A general AI knows how to apply knowledge and skills in a variety of contexts.

There exists no general AI in the world. AI researchers and developers haven’t been able to create one yet.

To build a general AI, AI engineers would need to make machines conscious and build a full set of cognitive abilities into the system.

Even though this might sound like SciFi, a lot of money is spent on the research and development of general AI. For example, Microsoft invested $1B in OpenAI for developing general AI.

Also, researchers have used the Fujitsu K computer to simulate neural activity in an effort to move toward general AI. At the time, K was one of the fastest supercomputers in the world. But still, it took 40 minutes to simulate one second of neural activity. With results like this, it’s hard to tell whether general AI will be accomplished soon or not.

3. Super AI

Super AI, or Artificial Superintelligence (ASI), would outperform humans in intelligence. Super AI systems would be capable of doing everything better than humans do.

A super AI would have greater memory, faster data processing, and more reliable decision-making than humans.

The moment in time when we reach super AI is commonly called the singularity.

Even though super AI sounds appealing, it might also change the way we live for better or worse. Needless to mention, a super AI could also be a threat to humanity’s existence.

Luckily, there’s still a lot of time to focus on AI safety so that we don’t build a system that ends humanity.

As a matter of fact, no one really knows if we will ever reach super AI. At this point, we haven’t even reached general AI yet.

AI Types Based on Functionalities

1. Reactive Machines

A reactive machine is a rudimentary type of AI. A reactive machine doesn’t store information. Thus, it cannot learn from past experiences.

A reactive machine can only make a prediction based on the present data. It is given a specific set of tasks, and it isn’t capable of executing tasks beyond the limited set of instructions given to it.

A great example of a reactive machine is IBM’s Deep Blue. This system won a chess match against the grandmaster Garry Kasparov. Deep Blue only sees the arrangement of the pieces on the chessboard and reacts to it. The system doesn’t remember earlier moves, so it doesn’t improve over time.

The system is capable of identifying the pieces on the chessboard. Besides, it knows all the possible movements for each piece.

Deep Blue makes a prediction about the next moves for itself and the opponent. But it doesn’t hold any information about any earlier move.
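The reactive idea can be sketched with a much smaller game than chess. The example below is not Deep Blue’s algorithm. It assumes a simple stick game in which two players take turns removing 1 to 3 sticks and whoever takes the last stick wins. The function names are hypothetical. The point is that the move depends only on the current state, with nothing remembered between calls.

```python
def can_win(sticks_left):
    """True if the player to move can force a win from this position."""
    return any(
        not can_win(sticks_left - take)
        for take in (1, 2, 3)
        if take <= sticks_left
    )


def reactive_move(sticks_left):
    """Pick a move by looking ahead from the current position only."""
    for take in (1, 2, 3):
        # Prefer a move that leaves the opponent in a losing position.
        if take <= sticks_left and not can_win(sticks_left - take):
            return take
    return 1  # no winning move exists, so just take one stick


print(reactive_move(10))  # 2, which leaves the opponent with 8 sticks
print(reactive_move(7))   # 3, which leaves the opponent with 4 sticks
```

Calling `reactive_move` twice with the same number of sticks always gives the same answer, because the function keeps no record of earlier games or moves.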

2. Limited Memory Machines

A limited memory machine is an AI that uses data from the recent past to make decisions/predictions.

As the name suggests, the memory of a system like that is limited. The idea of a limited memory machine is that the memory is short-lived. It can be saved for some period of time, but there is no central data storage to permanently save it.

Limited memory machines are used when the immediate past is needed to make quick decisions. A great example of a limited memory machine is self-driving cars. A self-driving car works as follows:

- The limited memory AI makes observations on other vehicles moving around it.

- The data, such as lane markers, speed limits, and traffic lights, is stored temporarily in the machine.

- This temporary data is used to help change lanes, avoid hitting other vehicles, and stop at red lights.

If you think about it, a self-driving car doesn’t need to know what car was driving ahead of it 5 miles ago. It only cares about the present and the immediate future. This is why there is no need to store the traffic data in a bigger system. All the vehicle needs is to know what happens around it now.
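A short-lived memory like this can be sketched with a fixed-size buffer. The example below is an illustration only. The class name `LimitedMemoryDriver`, the gap readings, and the braking rule are all made-up assumptions, and a real vehicle uses far richer sensor data.

```python
from collections import deque


class LimitedMemoryDriver:
    """Keeps only the most recent distance readings to the vehicle ahead."""

    def __init__(self, memory_size=3):
        # Older readings are dropped automatically once the deque is full.
        self.recent_gaps_m = deque(maxlen=memory_size)

    def observe(self, gap_m):
        """Store the latest gap (in meters) to the vehicle ahead."""
        self.recent_gaps_m.append(gap_m)

    def decide(self):
        """Brake if the gap has kept shrinking over the remembered readings."""
        gaps = list(self.recent_gaps_m)
        if len(gaps) < 2:
            return "keep speed"
        shrinking = all(later < earlier for earlier, later in zip(gaps, gaps[1:]))
        return "brake" if shrinking else "keep speed"


driver = LimitedMemoryDriver(memory_size=3)
for gap in [50, 52, 40, 30, 20]:  # hypothetical gap readings in meters
    driver.observe(gap)

print(list(driver.recent_gaps_m))  # [40, 30, 20]: the two oldest readings are gone
print(driver.decide())             # brake
```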

3. Theory of Mind

Theory of Mind AI is a conceptual AI that refers to a sophisticated class of technology. A theory of mind AI has a deep understanding of feelings and behavior and how the environment and people affect them.

A theory of mind AI is capable of understanding the emotions of people. Also, it understands our sentiments and thoughts thoroughly.

Even though the theory of mind lives as a concept, there are many implementations that try to accomplish the theory of mind AI.

One such example is Kismet. An MIT researcher built this robot head back in the 1990s. The robot has the ability to mimic human emotions. It can also recognize the emotions of the humans around it. These are amazing achievements that take us a step closer to theory of mind AI. But Kismet can neither follow gazes nor shift its attention to humans.

4. Self-Aware AI

As the name suggests, the self-aware AI is completely aware of itself.

Naturally, this type of AI doesn’t exist yet and it might be decades or even centuries away from us.

The self-aware AI behaves so much like the human brain that it has developed self-awareness. A self-aware AI is the ultimate goal of all AI research.

A self-aware AI will have emotions, beliefs, needs, and desires.

The doomsayers are well aware of this type of AI. Even though self-aware AI could vastly improve our society, it could also lead to a disaster. What if the self-aware AI is smarter than us and decides to get rid of us?

Luckily, there is a lot of time and a lot to learn before self-aware AI becomes a reality. Perhaps by then, researchers will have made AI safe enough that a self-aware AI doesn’t become our enemy.

So far, you have learned how AI works, what the main subfields of AI are, and what the different types of AI are. You have also seen a bunch of real-life examples of AI. Next, let’s discuss why AI is important to us.

Why Is Artificial Intelligence Important to Us?

With AI, humans are capable of automating the learning and data analysis process.

When there are loads of data, it would be infeasible for a human to analyze it all and learn features from it. This is where AI helps.

AI allows businesses and researchers to gain valuable insights from data.

Because AI works on a computer, there is basically no fatigue. More importantly, the data discovery process is reliable.

Even though AI is revolutionary in some sense, we still need humans to ask the right types of questions. Furthermore, we need humans to code AI programs to discover the answers to our questions.

AI is typically used to add intelligence to existing products or solutions. It’s rare to have a solution that is completely AI-based.

For example, the new generation of Apple products uses Siri, which is an AI-based voice control feature. Without Siri, Apple products would still be more than impressive, but Siri makes using the products even more streamlined.

Applications of AI

There are countless applications of AI. Here is a list of the most notable AI applications across the biggest industries.

1. E-Commerce

AI is used in many areas of e-commerce.

For example, AI is used in personalized shopping to create recommendation engines for better engagement with customers. The recommendations are based on the visitor’s preferences, interests, and browsing history.
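As a rough illustration, here is a tiny recommendation sketch that suggests products viewed by visitors with similar browsing histories. The visitor names, the products, and the overlap-based scoring are assumptions made for this example. Real recommendation engines use much more data and more refined models.

```python
from collections import Counter

# Hypothetical browsing histories: visitor -> set of viewed products.
histories = {
    "alice": {"tent", "sleeping bag", "stove"},
    "bob": {"tent", "sleeping bag", "lantern"},
    "carol": {"laptop", "mouse"},
}


def recommend(visitor, histories, top_n=2):
    """Suggest products viewed by visitors with overlapping histories."""
    seen = histories[visitor]
    scores = Counter()
    for other, items in histories.items():
        if other == visitor:
            continue
        overlap = len(seen & items)  # shared products act as a similarity score
        for item in items - seen:
            scores[item] += overlap
    # Keep only products backed by at least one similar visitor.
    return [item for item, score in scores.most_common(top_n) if score > 0]


print(recommend("alice", histories))  # ['lantern']
print(recommend("carol", histories))  # []: nobody shares her interests yet
```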

Another popular e-commerce application of AI is chatbots and AI shopping assistants.

These tools use natural language processing to make the human-computer interaction as smooth and streamlined as possible. Virtual assistants have the ability to interact with customers in real time. Sooner or later, most customer service may be handled entirely by AI assistants.

2. Education

Education has traditionally relied heavily on human work. In the recent past, AI has started to make its way to the education space too. This has led to better productivity as educators can spend less time on mundane office tasks and focus more on the students.

One of the notable uses of AI in education is automating non-educational tasks. These include duties such as grading, paperwork, arranging parent interactions, facilitating feedback, and managing courses and other HR-related topics.

Creating smart educational content is also easier these days, thanks to AI. For instance, it’s easy to add AI-powered animations, text-to-speech, and other similar enhancements to the study materials. This leads to increased productivity and higher engagement.

Besides, the student’s progress can be tracked and analyzed better by using AI. This leads to personalized learning that helps individual students based on their desires and learning goals.

The AI can analyze habits, plans, reminders, notes, and other important factors to make better decisions for teaching the student.

3. Lifestyle

The coolest AI products are the ones that affect our lifestyles.

One of the key uses of AI in our lifestyle is the rapidly growing field of self-driving vehicles. Companies like Tesla, Audi, and Toyota are heavily investing in making vehicles autonomous.

This happens with the help of AI. More specifically, the vehicles have an embedded computer that uses machine learning algorithms to autonomously drive the vehicle.

Another more down-to-earth lifestyle application of AI is spam filters. There is a whole bunch of junk emails we receive every day. To avoid binning useful emails while keeping the inbox clean, the spam filter must make intelligent decisions. This is why the best spam filters use AI to classify the emails in the inbox.
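One classic way to build such a filter is a naive Bayes classifier, which compares how often words appear in spam and in legitimate emails. The sketch below assumes that technique and a tiny made-up training set. It is not a claim about how any particular email provider works.

```python
import math
from collections import Counter

# Hypothetical, tiny training set; a real filter learns from a huge number of emails.
training_emails = [
    ("win a free prize now", "spam"),
    ("free money win big", "spam"),
    ("meeting agenda for monday", "ham"),
    ("lunch on monday", "ham"),
]


def train(emails):
    """Count how often each word appears in spam and in legitimate ("ham") emails."""
    word_counts = {"spam": Counter(), "ham": Counter()}
    email_counts = Counter()
    for text, label in emails:
        email_counts[label] += 1
        word_counts[label].update(text.split())
    return word_counts, email_counts


def classify(text, word_counts, email_counts):
    """Return the label with the higher naive Bayes log-probability."""
    vocabulary = set(word_counts["spam"]) | set(word_counts["ham"])
    total_emails = sum(email_counts.values())
    scores = {}
    for label in ("spam", "ham"):
        total_words = sum(word_counts[label].values())
        score = math.log(email_counts[label] / total_emails)
        for word in text.split():
            # Add-one (Laplace) smoothing so unseen words do not zero out the score.
            count = word_counts[label][word] + 1
            score += math.log(count / (total_words + len(vocabulary)))
        scores[label] = score
    return max(scores, key=scores.get)


word_counts, email_counts = train(training_emails)
print(classify("free prize", word_counts, email_counts))      # spam
print(classify("monday meeting", word_counts, email_counts))  # ham
```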

The third application worth mentioning is facial recognition. This technique is used to improve secure access to mobile devices and laptops.

The challenge of facial recognition is to detect the face in different lighting, clothing, and facial expressions. To develop a reliable facial recognition system, the engineers build clever AI algorithms to accurately recognize a face.

4. Robotics

It’s probably not a surprise that AI is widely used in robotics. To make a robot move in a public space, it has to be well aware of its surroundings. Thanks to AI, the robots have the ability to see the space they’re moving in. This makes it possible to make plans and changes to the journey on the spot.

Robots are used to assist humans in many facilities. For example, a robot can carry goods in factories and warehouses or even hospitals.

Some robots are coded to clean office spaces.

Inventory management is also increasingly handled by bots that do the low-value repetitive manual work for us.

5. Human Resources

These days the hiring process can be automated from candidate sourcing all the way to the interview process.

Instead of searching for talent manually, HR teams use AI-powered solutions to do the work. This helps companies make the hiring process more cost-efficient and effective. Instead of going through a handful of talent profiles by hand, AI-based solutions can scan millions of profiles.

Furthermore, the AI helps match potential employees by taking into account factors like:

- Job experience

- Education

- Values

- Location

- Availability

And much more.

6. Healthcare

The healthcare sector needs AI in a variety of applications.

For example, AI can be used in detecting diseases and identifying cancer cells. AI-based healthcare solutions use lab data or other types of medical data (such as images) to make an early diagnosis.

7. Agriculture

You might be surprised that AI has made its way to agriculture too!

With AI-based computer vision programs, it’s easier to detect nutrient deficits and defects in the fields.

Also, AI robots can harvest the crop at a much faster pace and in greater volumes than human workers.

8. Gaming

You may already know that the gaming industry relies on game developers that write code to make the games.

To build an online game, there is no need to use AI. But to create realistic human-like NPCs, AI can offer tremendous help.

Also, AI can help game developers improve the game by analyzing and predicting human behavior in the game.

9. Social Media

When you browse social media, you may have noticed how accurate the platforms' suggestions are.

YouTube recommends videos that you likely want to see. Also, the search suggestions are scarily accurate even when you have typed only a few characters.

AI also helps translate social media posts more accurately.

Similar to how spam filtering uses AI to classify messages, social platforms use AI to detect hateful and explicit content.
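To give a rough idea of what "classifying messages" means, here is a minimal sketch of a naive Bayes text classifier written in plain Python. It is only an illustration under simplifying assumptions: the training messages are made up, the `train` and `classify` functions are names chosen for this example, and real platforms use far larger datasets and more advanced models.

```python
import math
from collections import Counter

# Tiny made-up training set: (message, label).
TRAINING = [
    ("win a free prize now", "spam"),
    ("free money click now", "spam"),
    ("claim your free prize", "spam"),
    ("meeting at noon tomorrow", "ok"),
    ("lunch with the team tomorrow", "ok"),
    ("notes from the meeting", "ok"),
]


def train(examples):
    """Count words per label and messages per label."""
    word_counts = {}
    label_counts = Counter()
    for text, label in examples:
        label_counts[label] += 1
        word_counts.setdefault(label, Counter()).update(text.split())
    return word_counts, label_counts


def classify(text, word_counts, label_counts):
    """Return the label with the highest log-probability for the text."""
    vocabulary = {w for counts in word_counts.values() for w in counts}
    total_messages = sum(label_counts.values())
    best_label, best_score = None, -math.inf
    for label, counts in word_counts.items():
        # Prior: how common the label is in the training data.
        score = math.log(label_counts[label] / total_messages)
        total_words = sum(counts.values())
        for word in text.split():
            # Laplace (add-one) smoothing avoids log(0) for unseen words.
            score += math.log((counts[word] + 1) / (total_words + len(vocabulary)))
        if score > best_score:
            best_label, best_score = label, score
    return best_label


word_counts, label_counts = train(TRAINING)
print(classify("free prize tomorrow", word_counts, label_counts))
print(classify("team meeting tomorrow", word_counts, label_counts))
```

The same idea carries over from spam to hateful or explicit content: only the labels and the training examples change.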

10. Marketing

In marketing, AI helps deliver highly personalized and targeted ads. This is made possible by behavioral analysis and pattern recognition applied to data such as search history and location.

Another way to use AI in marketing is via chatbots. The chatbots use natural language processing to create human-like messages for higher engagement and improved user experience. These days, chatbots are really clever and can carry out basic tasks in an instant without human intervention.

FAQ

Last but not least, let’s take a look at some common questions related to Artificial Intelligence.

Does Artificial Intelligence Involve Coding?

Yes, AI is all about coding.

To build an AI program, there has to be code somewhere behind the scenes.

When I first heard about AI, someone told me “It’s some magic where computers learn stuff and no coding is needed”.

This is partially true because AI programs indeed mimic the human learning process. Instead of following rules that a developer has hard-coded, an AI program learns from data and makes decisions similar to how we humans do.

But to create an AI program, a software developer must write some code like in any other computer program.
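The difference between a hard-coded rule and a learned one can be sketched in a few lines of Python. This is a toy illustration only: the link-counting rule, the labeled data, and the function names are all made up for the example.

```python
def hard_coded_is_spam(num_links: int) -> bool:
    # The programmer picks the rule (more than 3 links) by hand.
    return num_links > 3


def learn_threshold(examples):
    """Pick the threshold that misclassifies the fewest labeled examples."""
    candidates = sorted({n for n, _ in examples})
    best_threshold, fewest_errors = None, len(examples) + 1
    for threshold in candidates:
        errors = sum((n > threshold) != is_spam for n, is_spam in examples)
        if errors < fewest_errors:
            best_threshold, fewest_errors = threshold, errors
    return best_threshold


# Made-up labeled data: (number of links in a message, is it spam?).
labeled = [(0, False), (1, False), (2, False), (5, True), (7, True), (9, True)]
threshold = learn_threshold(labeled)


def learned_is_spam(num_links: int) -> bool:
    # The rule comes from the data, not from the programmer.
    return num_links > threshold


print(threshold)
print(learned_is_spam(6))
```

Both versions are still code. In the second one, the developer writes the learning procedure, and the data decides the rule.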

Machine Learning vs AI

Machine Learning or ML is a subset of Artificial Intelligence. This means every Machine Learning application is also an AI solution, but not every AI solution uses Machine Learning.

You will commonly see the terms machine learning and artificial intelligence used interchangeably.

Notice that machine learning is a subfield of AI, deep learning is a subfield of machine learning, and neural networks are the building blocks of deep learning.

Will AI Take Over?

It’s impossible to say.

If AI capabilities exceed the human brain, it’s hard to tell what will happen.

The most dystopian scenario is that AI concludes humans are only harmful and decides to get rid of us.

But it is also possible that AI treats humans as friends. In this case, the AI systems would do everything to keep us alive and as comfortable as possible.

Also, there is a chance that the rapid development in AI slows down or hits a wall, such that a conscious AI will never be created.

Luckily, things are still in our control. At the end of the day, it’s the humans that decide where they want to take the AI capabilities. Hopefully, we never cross the point of no return!

At the current rate of development, amazing and unbelievable things are about to happen in the coming years, that’s for sure!

When Was AI Invented?

The concept of AI is not a new thing. The earliest theories of AI-based solutions date back to the 1940s.

But AI remained a concept for a long time because there was not enough computational power to put it into reality.

These days, AI is developing faster than ever before. It seems as if new solutions pop up every day.

Why Does It Seem That Every Company Uses AI These Days?

Using AI is not as difficult or magical as it sounds. Remember, AI can be something really simple, such as a linear regression model to predict how far kangaroos jump. All in all, AI is just maths, probabilities, and data analysis.
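To show how small such a model can be, here is a minimal sketch of a linear regression fitted with ordinary least squares in plain Python. The heights and jump lengths are made-up numbers for illustration only, and `fit_line` and `predict_jump` are names chosen for this example.

```python
# Made-up illustrative data: kangaroo heights (m) and jump lengths (m).
heights = [1.0, 1.2, 1.4, 1.6, 1.8]
jumps = [5.0, 6.0, 7.0, 8.0, 9.0]


def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept


slope, intercept = fit_line(heights, jumps)


def predict_jump(height):
    """Predict the jump length for a kangaroo of the given height."""
    return slope * height + intercept


print(round(predict_jump(1.5), 2))
```

With this toy data, the fitted line is roughly jump = 5 × height, so a 1.5 m kangaroo is predicted to jump about 7.5 m. Real data would be noisier, but the code would stay the same.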

Depending on the business, using AI can streamline some processes and help cut costs. But more importantly, adding a small piece of AI to the business logic makes a media-sexy headline.

How to Try AI?

To feel the power of AI, all you need to do is open up your mobile device’s voice control system and give it some instructions.

The speech-to-text system that powers the voice control systems is a common example of using AI.

Another really common use case for AI is automated chatbots. Software companies in particular tend to use AI chatbots a lot. When you visit a SaaS company website, chances are a chat box will pop up. When you ask a question, the AI chatbot service uses NLP (natural language processing) to determine the intent of your message and to find an answer.
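A very rough sketch of intent detection could look like the following. It uses plain keyword overlap, which is much simpler than the NLP models real chatbot services rely on. The intents, keywords, and canned answers are hypothetical and exist only for this example.

```python
import re

# Hypothetical intents, each with keywords and a canned answer.
INTENTS = {
    "pricing": ({"price", "cost", "pricing", "plan"},
                "You can find our plans on the pricing page."),
    "support": ({"help", "error", "bug", "broken"},
                "Sorry about that. Could you describe the problem?"),
    "greeting": ({"hi", "hello", "hey"},
                 "Hello! How can I help you today?"),
}
FALLBACK = "I am not sure I understood. Let me connect you with a human."


def detect_intent(message):
    """Return the intent whose keywords overlap most with the message, or None."""
    words = set(re.findall(r"[a-z']+", message.lower()))
    best_intent, best_overlap = None, 0
    for intent, (keywords, _answer) in INTENTS.items():
        overlap = len(words & keywords)
        if overlap > best_overlap:
            best_intent, best_overlap = intent, overlap
    return best_intent


def reply(message):
    """Answer with the matched intent's canned response, or the fallback."""
    intent = detect_intent(message)
    return INTENTS[intent][1] if intent else FALLBACK


print(reply("How much does the premium plan cost?"))
print(reply("What is the weather like?"))
```

The first message matches the pricing intent, while the second matches nothing and gets the fallback answer. A production chatbot would typically replace the keyword sets with a trained language model.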

Is AI Hard to Learn?

Learning AI isn’t hard. But it takes time and requires a lot of practice.

To learn AI, you need to learn two things:

- Programming. A typical language that is used in AI programs is Python, which is a beginner-friendly language.

- Maths. You will also need to know some linear algebra and probabilities to build AI programs.

In case you are interested in AI, you can start by learning how to code in Python. Here is an article that guides you on how to learn Python efficiently. But remember to be patient. Learning coding or AI doesn’t come overnight. You have to spend months before being able to build something significant :).

Wrap Up

Today you learned what artificial intelligence is.

To recap, artificial intelligence uses computers to mimic the human intelligence process. Although AI is not a new concept, the latest developments in computing power have turned theory into reality.

Behind the scenes, AI is nothing but linear algebra, probabilities, and some program code.

Today, AI is used in many applications across many industries. Typically, AI makes a great addition to an existing product. For example, Apple uses Siri, which is an AI-based voice control system. This system makes using Apple products even more efficient.

As a broad concept, AI has a bunch of subfields. These include:

- Machine Learning

- Deep Learning

- Neural Networks

- Cognitive computing

- Natural language processing

- Computer vision

Also, the AI programs can be classified into different types:

- Narrow AI is what all modern-day AI programs still represent.

- General AI is the level of intelligence the human brain has. This level of AI has not been achieved yet.

- Super AI is an AI that surpasses human brain capabilities. This level of AI could be our best friend or the greatest enemy.

Thanks for reading. I hope you found it insightful and perhaps actionable.